Is AI Ruining Your Home Theater – Part 2

In my last article on AI, I discussed how AI-generated “news” pieces are increasing in our feeds. They fool the unsuspecting into believing they are well-thought-out, fact-checked articles. But what about the devices in our home theater? The new trend is to slap “AI” onto a product in the hope that consumers will think it’s better than the competition. So hold on as I ask the question: is AI ruining your home theater? Let’s discuss.

What Is AI?

AI was once commonly defined as a computer system capable of performing tasks that typically only a human can do. That definition dates back to when we had rudimentary computers doing simple tasks like moving a widget back and forth.

Today, AI describes a wide array of programs that power many of the technologies we use regularly. Early CPUs (’80s and ’90s) were big and slow; modern CPUs are far smaller and boast much more speed and capability. With multi-core, multi-threaded processors now built into our gear, those programs can take advantage of that extra speed and computing power.

Manufacturers love to slap AI on everything! The term is familiar enough to resonate with us and nebulous enough to fool us into thinking a product is better!

Is AI in Home Theater New?

With all these new AI stickers and advertisements plastered over our gear, one would be safe in assuming it’s a new technology, right? You’d be wrong. Our equipment has had “AI” since the ’90s; it just was never called that. DSP modes, Dolby upmixing, room correction, and upscaling are just a few examples of hardware- and software-driven systems that change the sound or image on your system.

That’s right, folks. When your AV receiver steers sounds meant for the surround channels into the other speakers, using tricks like phase to fool our ears, that’s a form of AI. When your fancy DVD player upscales your 480i DVD image into a crisp (I use that term loosely) 1080p picture, that is a form of hardware AI at work. No, that kind of AI didn’t need a ton of software or hardware horsepower. It’s a simpler algorithm, developed by a human and applied to your content. Today, the modern AV receiver can create a virtual room with overheads and phantom channels, and even has video upscaling built in. What a time to be alive.
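The sum-and-difference idea behind passive matrix upmixing (the ancestor of Dolby’s two-channel-to-surround decoding) fits in a few lines of Python. This is a simplified sketch under stated assumptions: the function name `passive_matrix_decode` is hypothetical, samples are plain floats, and real decoders such as Dolby Pro Logic add active steering logic, plus filtering and delay on the surround channel, on top of this. What it shows is how phase alone decides where a sound ends up: content that is identical in both channels lands in the center, while content that is out of phase lands in the surround.

```python
import math


def passive_matrix_decode(lt, rt):
    """Split a two-channel Lt/Rt signal into left, right, center, and surround.

    Hypothetical, simplified passive decoder for illustration only; it is not
    Dolby's actual algorithm, which adds active steering, filtering, and delay.
    """
    gain = 1 / math.sqrt(2)  # -3 dB so the derived channels keep roughly equal power
    left, right, center, surround = [], [], [], []
    for l, r in zip(lt, rt):
        left.append(l)
        right.append(r)
        # In-phase content (same in both channels) reinforces in the sum.
        center.append(gain * (l + r))
        # Out-of-phase content cancels in the sum but survives in the difference.
        surround.append(gain * (l - r))
    return left, right, center, surround
```

For example, `passive_matrix_decode([0.5, 0.5], [0.5, -0.5])` sends the first (in-phase) sample entirely to the center and the second (out-of-phase) sample entirely to the surround, each at about 0.707.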

But How Does AI Ruin My Home Theater?

Some may argue that it doesn’t. They will argue that the chip inside the device “knows best” and that it will optimize the image or sound for the environment. Others will argue that it goes against “the artist’s intent” and that we should turn off all forms of upscaling or AI and let the signal be as pure as possible. Me? I sit squarely in the center, repeating my “it depends” mantra over and over.

When Do I Use AI?

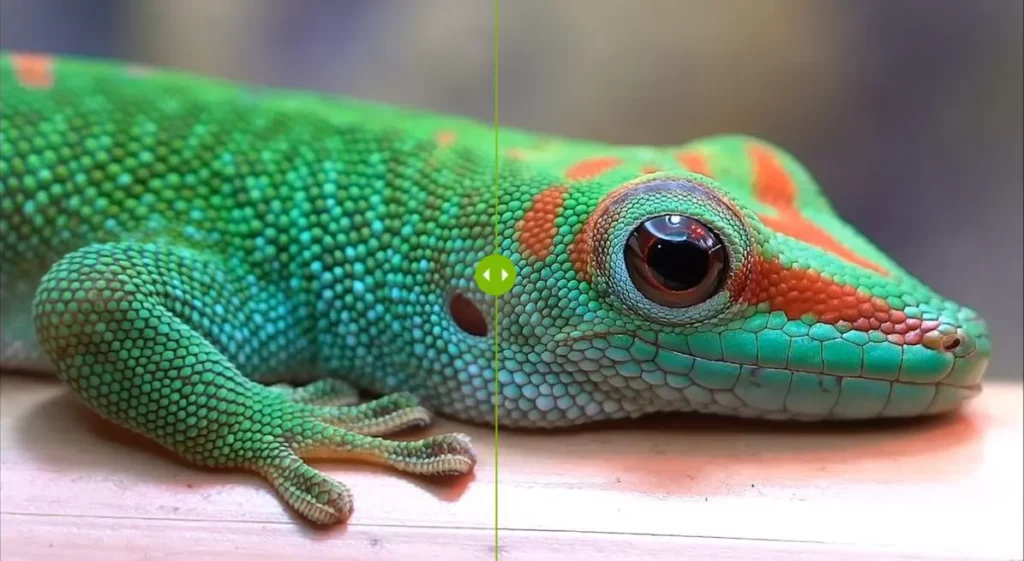

Where it makes sense for me! I am a child of the ’80s. As such, I have collected a lot of DVDs of cartoons I watched as a kid. In most cases, I have ripped them to my Plex server as direct 480p files. Anyone who has watched 480p in a 4:3 aspect ratio on a 4K set can attest to how bad that can look. Because of this, I use the AI upscaling on my NVIDIA Shield to help with the grainy, low-resolution video on my 4K TV.

In this case, there is a noticeable improvement in my video. Images are sharper, text is easier to read, and the overall picture looks less grainy. Before the question is asked: yes, I have turned it on and off to see if there is a difference. Here, the difference is objective, not subjective. You can easily see the difference in video quality. The subjective part is whether you like the changes or prefer the original.

AI upscaling is different from hardware upscaling. In hardware upscaling, the device (typically a DVD player) stretches the DVD’s pixel count to match the physical pixel count of an HDTV using a fixed mathematical formula. AI upscaling uses a neural engine trained on many images to predict what the correct image should look like, then fills in the missing details with the colors and shapes the neural engine thinks are right. In both cases, the device is inventing information to fill in detail that isn’t in the source. AI upscaling is simply more nuanced than the brute-force approach of hardware upscaling.
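To make the “fixed mathematical formula” side of that comparison concrete, here is a minimal Python sketch of bilinear interpolation, one common formula a scaler can use. Assumptions: the function name `bilinear_upscale` is hypothetical, the image is a single grayscale channel stored as a list of rows, and actual DVD-player and TV scaler chips may use different filters (bicubic, Lanczos, or proprietary ones). Every new pixel is just a distance-weighted blend of its four nearest source pixels. Nothing is learned, which is the key contrast with a neural upscaler that predicts detail from training data.

```python
def bilinear_upscale(image, out_h, out_w):
    """Resize a grayscale image (a list of rows) using bilinear interpolation.

    Hypothetical sketch of "fixed formula" upscaling; real scaler chips may
    use other filters and operate on color video rather than Python lists.
    """
    in_h, in_w = len(image), len(image[0])
    out = []
    for y in range(out_h):
        # Map this output row back onto the source grid (corners aligned).
        sy = y * (in_h - 1) / (out_h - 1) if out_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, in_h - 1)
        fy = sy - y0  # how far we are between source rows y0 and y1
        row = []
        for x in range(out_w):
            sx = x * (in_w - 1) / (out_w - 1) if out_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, in_w - 1)
            fx = sx - x0  # how far we are between source columns x0 and x1
            # Blend horizontally on the two neighboring rows, then vertically.
            top = image[y0][x0] * (1 - fx) + image[y0][x1] * fx
            bottom = image[y1][x0] * (1 - fx) + image[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out
```

Upscaling a tiny 2×2 image to 3×3 shows the idea: `bilinear_upscale([[0, 100], [100, 200]], 3, 3)` returns `[[0.0, 50.0, 100.0], [50.0, 100.0, 150.0], [100.0, 150.0, 200.0]]`, where every new pixel is an in-between value rather than new detail. A scaler going from 480 lines to 1080 would do this kind of blending across far more rows and columns.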

When Do I Turn Off AI?

I draw the line at AI that continually tinkers with the image. Things like brightness, dynamic contrast, and motion smoothing, when adjusted “on the fly,” can be distracting. My LG B9 OLED has several forms of AI built in that claim to learn your habits and preferences and apply them to all content. I don’t need that; I have picture modes for SD, HD, HDR, and gaming content that adjust these values for me automatically. I don’t need a sensor to gather information about my room and decide what it thinks the optimum number of nits is. Give me all the nits!

It’s the same with audio. I tend to shy away from all the fancy upmixing and simulated surround. If I don’t have a speaker in a given location, I don’t want my AV receiver trying to put sound there. I have the same attitude toward 5.1 movies that get upmixed to 7.1.4. If it wasn’t mastered in that format, don’t try to fake it. I would rather have a good 5.1 mix than a poorly implemented AI upmix.

I also limit myself to a single source of AI. In my setup, my TV, NVIDIA Shield, and AV receiver all have versions of AI or upscaling. There are no standards for what AI is supposed to do, so each company applies its own idea of the “best” image or sound, and stacking them can produce some weirdness. And I’ve watched enough movies to know that turning on all the AI is how the machines become sentient. Do you want to be responsible for a sentient AI that sends cyborgs back in time to hunt down the human resistance? I didn’t think so! So AI responsibly!

Our Take

As with all things in our hobby, you must objectively (and sometimes subjectively) evaluate whether AI enriches or detracts from your experience. Home theater enthusiasts are not your average consumer. My mom prefers all the motion smoothing and vivid color modes, but I do not. That’s not to say there aren’t cases where AI makes the experience better. But it’s up to you to decide what you like and don’t like.

If, subjectively, you like some of the changes that AI makes and they don’t align with what the hive mind thinks, who cares? It’s your room, your devices, and your content. You are welcome to consume it any way you like. Just don’t post about it online, lest you be besieged by the pitchfork-and-torch mob. So, to answer my own question: is AI ruining your home theater? It depends on how you use it!